In response to the Medicines and Healthcare products Regulatory Agency (MHRA) call for evidence on AI regulation, EBO supported The Patients Association in a recent study exploring how patients feel about the use of AI in healthcare.

Following the survey, Richard Samuel and Trudy Kerr sat down with Rachel Power, CEO of the Patients Association, on the latest episode of EBO’s Digital Dose podcast to explore the key findings in more detail and discuss what they mean for the future of AI in the NHS.

Bridging the Transparency Gap

A key insight from the research was that patients are generally open to the use of AI in the NHS, but want far greater transparency, accountability, and stronger regulation.

Rachel noted that the survey brought this into sharp focus:

49% of patients believed AI had been used in their care, yet only 10% had been told.

This gap between assumption and disclosure is where trust can begin to break down.

She explained that patients are not resistant to innovation, but they do need to understand when and how AI is being used, stressing that this information should not be hidden in technical detail or “small print,” but communicated in plain, accessible language.

The findings highlight the clear, practical steps that can be taken now to build and maintain patient confidence, recognising that without trust, innovation cannot succeed.

3 Practical Steps to Build Patient Trust

1. Clear patient information about AI

Patients need to be told, clearly and in plain English, when AI is involved in their care. Not in the small print, not hidden in policy documents, but openly, upfront, and in a way that actually explains what it is doing and why.

2. Accountability when things go wrong

There must be no ambiguity about who is responsible when things go wrong. Patients want clarity, not confusion: a clear human answer, not a system that feels distant or unclear. Accountability needs to be visible and real, not just written down in principle.

3. Ongoing oversight and transparency in live systems

Transparency cannot stop at the point of introduction. Patients want continuous visibility into how AI is being used in real services, and reassurance that it is actively monitored. They want to know who is watching, how it is being overseen, and that there is always a human at the wheel.

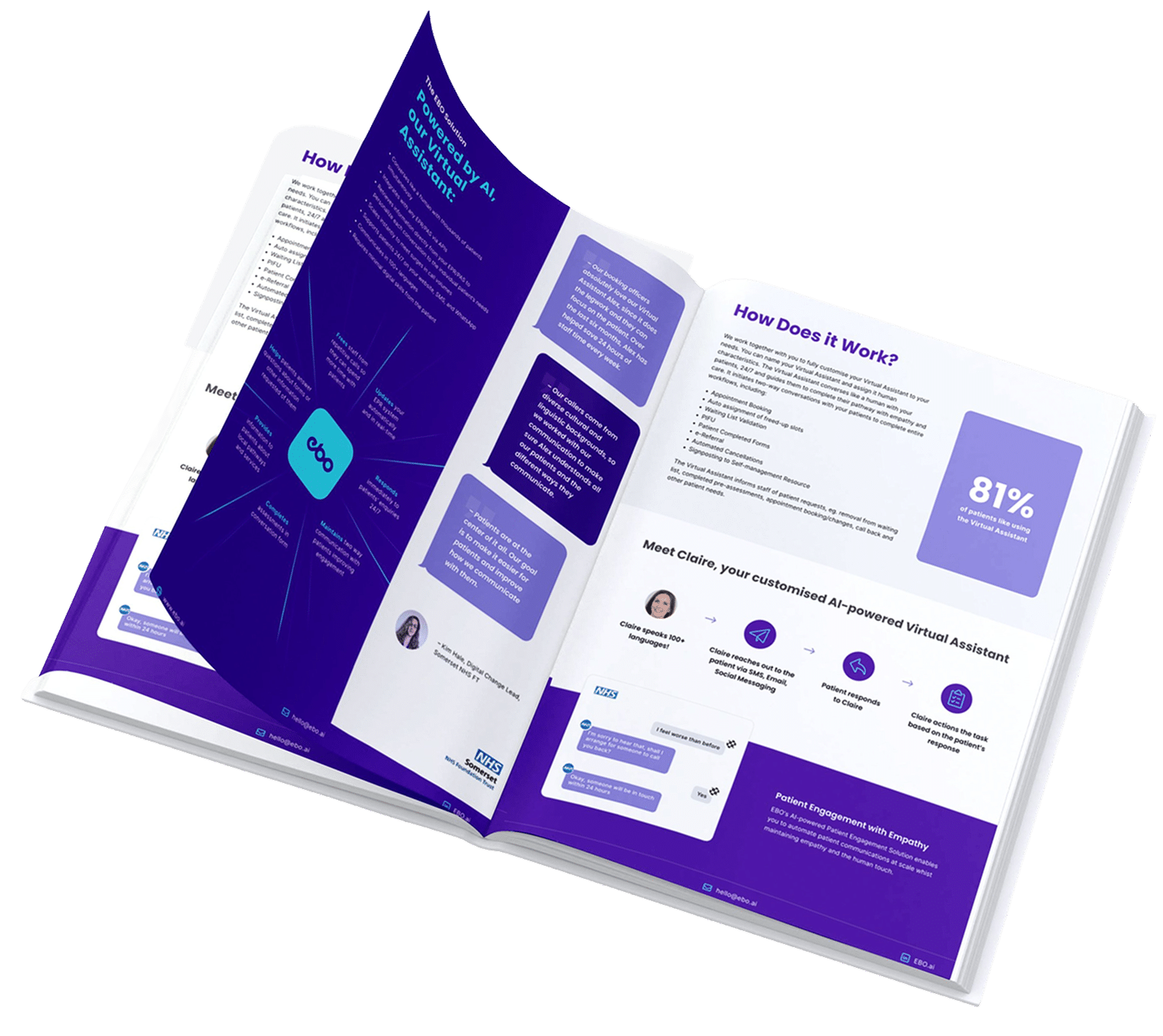

Access our Healthcare Guide today!

- Redefine Patient Engagement

- Build Meaningful Relationships

- Enhance Patient Access

Designing with patients, not just for them

While patient involvement is often discussed across the AI lifecycle, according to Rachel, its most critical impact is at the design stage.

It is at this point that assumptions are made, systems are structured, and workflows are defined. If patients are not included early, there is a risk that bias, exclusion, or inefficiency becomes embedded long before systems are deployed.

Patient involvement, however, should not be treated as a one-off consultation exercise. Instead, it should be structured, ongoing, and representative, with the ability to genuinely influence decisions. Patients and frontline staff often identify risks earlier than technical systems, making their role in continuous monitoring equally important.

Giving Patients their Voice Back

A further point raised in the findings is the importance of ensuring AI does not reinforce existing health inequalities.

Patients with lower digital literacy, those from marginalised communities, and individuals with more complex or non-standard healthcare needs may be more affected if systems are not designed with inclusion in mind.

Patients also have an important role to play here. They must be involved in the design process to help avoid blind spots, and included in ongoing monitoring so they can help identify what the data may be missing.

ChatGPT and the New Reality of AI in Healthcare

Richard points out that tools like ChatGPT, now the fastest-growing consumer technology in history, are already being used by people to understand and manage their health, well outside formal healthcare systems. While this reflects a major shift in how citizens access information, it also raises a major concern, since these general-purpose AI models are not clinically governed or safety-assured for medical use.

From an EBO standpoint, Richard suggests building healthcare AI systems with safety, governance, and continuous oversight embedded from the start.

This involves real-time monitoring of how users interact with the system (tracking behaviour, drop-off points, and escalation patterns) to ensure it is functioning safely and as intended.

These feedback loops are essential for ongoing refinement and control, reflecting a continuous process of assurance rather than a one-off deployment, similar to how clinical interventions are actively monitored over time.

He also emphasises a clear “human in the loop” approach, where EBO’s Virtual Assistants are used for initial engagement but are designed to detect signals such as anxiety, confusion, or distress and escalate immediately to a clinician.

In this model, AI plays a supporting role in access and triage, but does not operate independently in higher-risk situations, with human oversight reintroduced whenever uncertainty or potential risk is identified.
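For readers who want to picture how a "human in the loop" rule might look in practice, the routing idea described above can be sketched in a few lines of Python. This is an illustrative sketch only, not EBO's implementation: the signal names, the `Turn` structure, the `route` function, and the confidence threshold are all assumptions made for the example.

```python
from dataclasses import dataclass

# Hypothetical signal labels and threshold, chosen only for illustration.
ESCALATION_SIGNALS = {"anxiety", "confusion", "distress"}
CONFIDENCE_FLOOR = 0.7  # assumed cut-off below which the assistant counts as "uncertain"


@dataclass
class Turn:
    """One patient message, as summarised by an assumed upstream classifier."""
    detected_signals: set[str]    # e.g. {"distress"}
    confidence: float             # assistant's confidence in its reply, 0.0 to 1.0
    high_risk_topic: bool = False


def route(turn: Turn) -> str:
    """Return "clinician" when a human should take over, otherwise "assistant"."""
    # Any sign of anxiety, confusion, or distress hands over immediately.
    if turn.detected_signals & ESCALATION_SIGNALS:
        return "clinician"
    # Uncertainty or a higher-risk situation also brings a human back in.
    if turn.high_risk_topic or turn.confidence < CONFIDENCE_FLOOR:
        return "clinician"
    return "assistant"


print(route(Turn({"distress"}, 0.95)))  # clinician
print(route(Turn(set(), 0.9)))          # assistant
```

The point of the sketch is the ordering: the checks for human escalation run first, and the assistant only continues when none of them apply.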

What the NHS of Tomorrow Means for Patients Today

When asked what a well-designed, patient-centred AI system in the NHS should look like, Rachel highlighted both a clear opportunity and a growing expectation from patients to be more meaningfully involved in how AI is developed and used.

While 76% of patients say involvement in AI-related decisions is important, the findings also show there is still work to do to strengthen confidence in how regulation is experienced in practice.

For Rachel, this presents an opportunity to further embed patient voice into the development of healthcare AI, in line with ambitions set out in the NHS 10-year health plan. This includes:

√ more structured patient input

√ clearer communication in plain language

√ and stronger visibility of how AI systems support care

She also emphasised that patients, families, and frontline staff are often closest to real-world care delivery, meaning their insights can be invaluable in shaping systems that work effectively in practice as well as in design.

At EBO, we share this view and work closely with clinicians and operational teams to ensure AI is designed in a way that is practical, transparent, and grounded in everyday healthcare needs.

By collaborating with those delivering care, we help ensure technology supports clinical workflows, enhances efficiency, and keeps the patient experience at the centre.

→ Download our AI guide to find out more about our healthcare digital solutions.